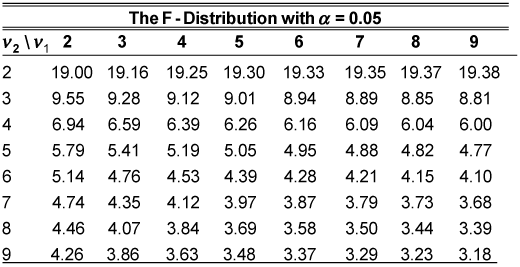

So our F statistic has an F distribution, and we won't go real deep into the details of the F distribution. But you can already start to think of it as the ratio of two chi-squared random variables, each divided by its own degrees of freedom, and those degrees of freedom may or may not be different. Our F statistic is going to be the ratio of our Sum of Squares between the samples (SSB) divided by our degrees of freedom between, m-1 (this quantity is sometimes called the mean squares between, MSB), over the Sum of Squares within (that's what I had done up here, the SSW in blue) divided by the degrees of freedom of the Sum of Squares within, which was m(n-1). Now let's think about what this ratio is doing. If the numerator is much larger than the denominator, then the variation in this data is due mostly to the differences between the actual means, and less to the variation within each sample. That should make us believe that there is a difference in the true population means: if this number is really big, it tells us that a result this extreme would be unlikely if our null hypothesis were correct. If this number is really small and our denominator is larger, that means the variation within each sample makes up more of the total variation than the variation between the samples.
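The ratio described above can be computed directly. This is a minimal sketch, assuming m equally sized groups of n observations each (so the within-groups degrees of freedom are m(n-1)); the function name `f_statistic` and the scores are hypothetical, for illustration only.

```python
from statistics import fmean

def f_statistic(groups):
    """One-way ANOVA F statistic for m groups of n observations each.

    F = (SSB / (m - 1)) / (SSW / (m * (n - 1))) = MSB / MSW
    """
    m = len(groups)
    n = len(groups[0])
    if any(len(g) != n for g in groups):
        raise ValueError("this sketch assumes equally sized groups")
    grand_mean = fmean([x for g in groups for x in g])
    # Between: each group mean's squared distance from the grand mean, weighted by n.
    ssb = sum(n * (fmean(g) - grand_mean) ** 2 for g in groups)
    # Within: each point's squared distance from its own group mean.
    ssw = sum((x - fmean(g)) ** 2 for g in groups for x in g)
    msb = ssb / (m - 1)        # mean squares between
    msw = ssw / (m * (n - 1))  # mean squares within
    return msb / msw

# Hypothetical scores: three groups (food 1, 2, 3) of three test-takers each.
print(f_statistic([[3, 2, 1], [5, 3, 4], [5, 6, 7]]))  # 12.0
```

Here MSB is 12 times MSW, so the between-group variation dominates. If all three groups had identical sample means, SSB and therefore F would be 0. The sketch does not handle the degenerate case SSW = 0, where the ratio is undefined.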

So my question is: is "this" equal to "this", equal to mean 3, the true population mean of group 3? Because if they're not equal, that means the type of food given does have some type of impact on how people perform on a test. So let's do a little hypothesis test here. Let's say that my null hypothesis is that the means are the same, "food doesn't make a difference", and that my alternate hypothesis is that "it does." The way of thinking about this quantitatively is that if it doesn't make a difference, the true population means of the groups will be the same: the true population mean of the group that took food 1 will be the same as that of the group that took food 2, which will be the same as that of the group that took food 3. If our alternate hypothesis is correct, then these means will not all be the same. How can we test this hypothesis? We're going to assume the null hypothesis, which is what we always do when we are hypothesis testing. Then we're going to come up with a statistic called the F statistic, which I haven't even defined yet, and essentially figure out: what are the chances of getting a statistic this extreme?
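To make "the chances of getting a statistic this extreme" concrete, here is a minimal sketch. It assumes three groups, so the numerator has m-1 = 2 degrees of freedom; for that case only, the upper tail of the F distribution has the closed form P(F >= f) = (1 + 2f/d2)^(-d2/2), where d2 is the within-groups degrees of freedom. For other numerator degrees of freedom you would use a library routine such as SciPy's `scipy.stats.f.sf`. The function name `f_tail_prob_df1_2`, the F value of 12 and the 0.05 significance level are hypothetical, chosen only for illustration.

```python
def f_tail_prob_df1_2(f_value: float, df_within: int) -> float:
    """P(F >= f_value) under the null hypothesis, for an F distribution
    with 2 numerator degrees of freedom (three groups) and df_within
    denominator degrees of freedom.

    Closed form valid ONLY when the numerator df is 2:
        P(F >= f) = (1 + 2 f / df_within) ** (-df_within / 2)
    """
    if f_value < 0:
        raise ValueError("an F statistic cannot be negative")
    return (1 + 2 * f_value / df_within) ** (-df_within / 2)

# Hypothetical example: 3 groups of 3 scores gives df_within = 3 * (3 - 1) = 6.
p = f_tail_prob_df1_2(12.0, 6)
alpha = 0.05  # illustrative significance level, fixed before looking at the data
print(round(p, 4))  # 0.008
print("reject H0" if p < alpha else "fail to reject H0")
```

A small tail probability means that, if the null hypothesis were true, an F statistic this large would rarely occur, which is the evidence used to reject it.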

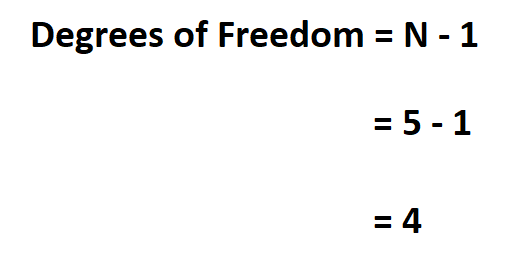

In the last couple of videos we first figured out the TOTAL variation in these 9 data points right here, and we got 30; that's our Total Sum of Squares. Then we asked ourselves: how much of that variation is due to variation WITHIN each of these groups, versus variation BETWEEN the groups themselves? For the variation within the groups we have our Sum of Squares within, and the balance of the 30 came from variation between the groups, which we calculated to be 24 (so the Sum of Squares within accounts for 30 - 24 = 6). What I want to do in this video is actually use this type of information, essentially these statistics we've calculated, to do some inferential statistics: to come to some type of conclusion, or maybe not to come to one. First I want to put some context around these groups. We've been dealing with them abstractly so far, but you can imagine these are the results of some type of experiment. Let's say that I gave 3 different types of pills or 3 different types of food to people taking a test. So this is food 1, food 2, and then this over here is food 3. I want to figure out whether the type of food people take going into the test really affects their scores. If you look at these means, it looks like they perform best in group 3, better than in group 2 or 1. But each of these is only a sample mean, based on 3 data points. So my question here is: are the true population means the same? Is that difference purely random chance, or can I be pretty confident that it's due to actual differences in the population means of all of the people who would ever take food 3 vs. food 2 vs. food 1? Is the mean of the population of people taking food 1 equal to the mean for food 2? Obviously I'll never be able to give that food to every human being that could ever live and then make them all take an exam, so the true mean isn't really measurable. But there is some true mean there, and if I knew the true population means, I could ask directly whether they are equal.
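The split of the total variation into within-group and between-group parts can be checked numerically. This is a minimal sketch with hypothetical scores for three groups of three (the actual data are in the figure, not the text); the values are chosen so the totals reproduce the SST = 30 and SSB = 24 quoted above. The helper name `sum_of_squares` is hypothetical.

```python
from statistics import fmean

def sum_of_squares(groups):
    """Return (SST, SSW, SSB) for a list of groups of observations."""
    all_points = [x for g in groups for x in g]
    grand_mean = fmean(all_points)
    # Total: squared distance of every point from the grand mean.
    sst = sum((x - grand_mean) ** 2 for x in all_points)
    # Within: squared distance of every point from its own group mean.
    ssw = sum((x - fmean(g)) ** 2 for g in groups for x in g)
    # Between: each group mean's squared distance from the grand mean,
    # counted once per data point in that group.
    ssb = sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups)
    return sst, ssw, ssb

# Hypothetical test scores for the food 1, food 2 and food 3 groups.
groups = [[3, 2, 1], [5, 3, 4], [5, 6, 7]]
sst, ssw, ssb = sum_of_squares(groups)
print(sst, ssw, ssb)  # 30.0 6.0 24.0
```

The identity SST = SSW + SSB holds here exactly: 30 = 6 + 24.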
